We have been chatting about how the technological movement known as “big data” has reached its tipping point, and it seems that nothing is going to stop it.

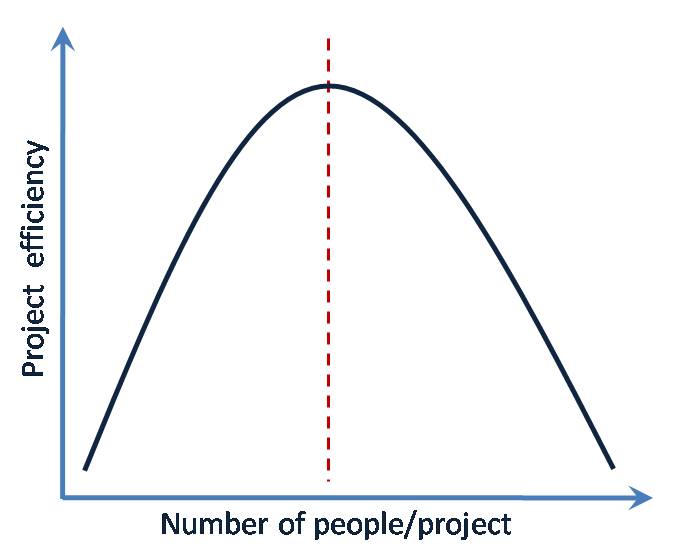

But I have noticed something. Big data leads to complexity. Expensive complexity. And it’s not just the technological infrastructure that’s pricey; most organizations budget for that. No, it’s the stable of data jockeys needed to actually realize the technology’s benefits.

Too often, state-of-the-art data technology sits woefully underutilized because the organization can’t find, or can’t afford, the people to staff it.

Earlier in my career, I developed a response model that regularly lifted net results by 15%. It wasn’t a particularly complex model – in my mind, anyway. But the model was a complete flop in execution because the clients didn’t have the staff or tools to score their data with it. Applying the model simply proved too complex. Invariably, when drop dates were in jeopardy, the decision was made to “pull the data using the old RFM way.”
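For readers who haven’t run one, an RFM selection scores each customer on recency (days since last purchase), frequency (number of orders), and monetary value (total spend), then picks the top scorers for the mailing. The Python below is a minimal sketch of that idea, not the author’s or any client’s actual process: the 1-to-5 rank-based scores, the `Customer` fields, the hypothetical `rank_score`, `rfm_scores`, and `select_for_mailing` helpers, the made-up sample customers, and the cutoff of 12 are all illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Customer:
    customer_id: str
    last_purchase: date
    orders: int
    total_spend: float


def rank_score(values, value, bins=5, higher_is_better=True):
    """Map value to a 1..bins score by how many values fall strictly below it."""
    below = sum(1 for v in values if v < value)
    score = below * bins // len(values) + 1
    # Flip the scale when smaller raw values are better (e.g. days since purchase).
    return score if higher_is_better else bins + 1 - score


def rfm_scores(customers, as_of, bins=5):
    """Return {customer_id: (R, F, M)} with each component scored 1..bins."""
    recency = [(as_of - c.last_purchase).days for c in customers]
    frequency = [c.orders for c in customers]
    monetary = [c.total_spend for c in customers]
    return {
        c.customer_id: (
            rank_score(recency, r, bins, higher_is_better=False),
            rank_score(frequency, f, bins),
            rank_score(monetary, m, bins),
        )
        for c, r, f, m in zip(customers, recency, frequency, monetary)
    }


def select_for_mailing(customers, as_of, min_total=12):
    """Hypothetical selection rule: mail anyone whose R + F + M meets the cutoff."""
    scores = rfm_scores(customers, as_of)
    return [cid for cid, (r, f, m) in scores.items() if r + f + m >= min_total]


# Illustrative sample data, made up for this sketch.
as_of = date(2024, 1, 31)
customers = [
    Customer("A", date(2024, 1, 30), 10, 900.0),
    Customer("B", date(2024, 1, 20), 6, 400.0),
    Customer("C", date(2023, 12, 31), 3, 150.0),
    Customer("D", date(2023, 10, 31), 2, 80.0),
    Customer("E", date(2023, 6, 30), 1, 20.0),
]

print(rfm_scores(customers, as_of))
print(select_for_mailing(customers, as_of))  # ['A', 'B']
```

The whole selection is three sorts and a sum, which is why it survives deadline pressure: anyone with a spreadsheet can rerun it without model-scoring infrastructure.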

And the lesson I learned is that sometimes simple is better, even though it may not yield the “best returns.”

Stay simple, my friends.